TextWidget: a text-based UI control

After reading Magic Ink, Bret Victor's excellent essay on information design in software, I started implementing a reusable JavaScript version of the text control he advocates.

Here is the TextWidget demo.

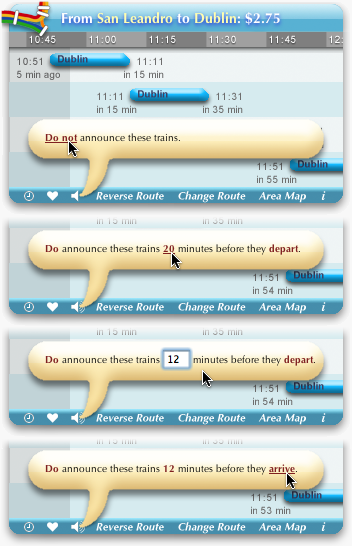

The screenshots above illustrate his original control, which consists mainly of text in natural language. The interaction model for manipulating the information is simple: some words can be clicked, and doing so changes the sentence or edits some values.

This control represents its state in a readable sentence at all times. This is much more efficient, compact and brain-friendly than common configuration dialogs (think of the recurring meeting dialog in Outlook). The sentence can be tuned incrementally by interacting with any portion which is incorrect.

The result of my project is a JavaScript library, TextWidget, which lets you easily incorporate this kind of graphical element into your web application.

Check out the TextWidget demo and read on for more details.

Information design

In his essay, Bret argues that most of today's software requires too many interactions to get to the information. Instead, more information should be visible at a glance (information density, data-to-ink ratio), with the right navigation state set by default (based on the context). Generally, the user should get all the information he needs in a single look.

The designer should aim to minimize interaction, tailoring the information to the user's tasks.

The essay advocates the use of more appropriate graphical elements for the desired goals and scenarios. An example would be using a map instead of a drop-down to select a location.

Bret highlights three primitive elements which are very efficient at conveying information: text/language, maps (where) and timelines (when).

You should read the explanation of the design process behind the BART widget, a tool for planning trips on the San Francisco train system. It is a brilliant piece of interface design which illustrates many of the essay's principles.

In particular, it demonstrates how to use text for displaying the selected options and allowing the options to be edited.

This use of text follows the design guidelines that the author promotes to reduce interaction: display the information using proper graphical elements for the domain, use relative navigation to let the user correct the model from the starting point (predicted based on context) to the desired state, and provide a tight interaction loop (immediately showing the effects of the interaction).

Seeing the screencast of the BART widget made me wonder how I would go about coding such an interactive text control.

TextWidget library

The TextWidget JavaScript library lets you implement a simple text control in HTML declaratively. You can start using the control with some XML and a few lines of JavaScript. The control is modeled by a tree of options, supplied as XML. A leaf in this tree can represent a selected option and contains a sentence. A sentence represents the current state of choices and variables.

Segments of a sentence can be interactive. Some keywords in the sentence can be clickable with simple markup such as "<sentence><a onclick='reminder=on'>Do not</a> remind me ... </sentence>".

Clicking on this segment changes the state of the model and refreshes the display.
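To make the click behaviour concrete, here is a minimal sketch of the idea. It is not the library's actual code. It assumes that an onclick value is a single name=value assignment, as in the example above. The function names (applyAction, render, click), the state shape and the second sample sentence are all made up for illustration.

```javascript
// Hypothetical sketch: NOT the TextWidget API.
// Assumes an action is a single "name=value" assignment, e.g. "reminder=on".
function applyAction(state, action) {
  const eq = action.indexOf("=");
  if (eq < 1) {
    throw new Error("Expected an action of the form name=value: " + action);
  }
  const name = action.slice(0, eq).trim();
  const value = action.slice(eq + 1).trim();
  // Return a new state object so the caller can re-render from it.
  return { ...state, [name]: value };
}

// One sentence per option value: the clickable keyword, the rest of the
// sentence, and the action triggered by a click (sample data, invented here).
const sentences = {
  off: { keyword: "Do not", rest: " remind me", action: "reminder=on" },
  on: { keyword: "Remind me", rest: " before the meeting", action: "reminder=off" },
};

// Text-only rendering: square brackets mark the clickable segment.
function render(state) {
  const s = sentences[state.reminder];
  return "[" + s.keyword + "]" + s.rest;
}

// Simulate a click on the keyword of the current sentence.
function click(state) {
  return applyAction(state, sentences[state.reminder].action);
}

let state = { reminder: "off" };
console.log(render(state)); // [Do not] remind me
state = click(state);
console.log(render(state)); // [Remind me] before the meeting
```

The point of the sketch is the loop: a click produces a new state, and the sentence is always derived from that state, so the display can never disagree with the model.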

A sentence can also have some input segments, such as "<sentence>Remind me in <input name='time'/> minutes</sentence>".

Interacting with such segments updates the variables of the currently selected options, without altering the options themselves.
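The separation between options and variables can be sketched as follows. Again, this is an illustration under assumptions, not the library's implementation: the model shape and the helpers setVariable and fillInputs are hypothetical, and the placeholder syntax is simply the input markup from the example sentence above.

```javascript
// Hypothetical sketch: NOT the TextWidget API.
// Options (which sentence is selected) and variables (values entered in
// input segments) are kept apart, so editing a variable leaves options alone.
function setVariable(model, name, value) {
  return {
    options: model.options,
    variables: { ...model.variables, [name]: value },
  };
}

// Replace each <input name='x'/> placeholder with the current value of x,
// or with "___" when no value has been entered yet.
function fillInputs(sentence, variables) {
  return sentence.replace(/<input name='([^']+)'\s*\/>/g, (match, name) =>
    Object.prototype.hasOwnProperty.call(variables, name)
      ? String(variables[name])
      : "___"
  );
}

const template = "Remind me in <input name='time'/> minutes";
let model = { options: { reminder: "on" }, variables: {} };

console.log(fillInputs(template, model.variables)); // Remind me in ___ minutes
model = setVariable(model, "time", 15);
console.log(fillInputs(template, model.variables)); // Remind me in 15 minutes
console.log(model.options.reminder);                // on (unchanged)
```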

Try the demo to interact with this control, see a more comprehensive example of XML input and experiment with your own.

The code in the demo page and the accompanying jsUnit tests show how easy the API is.

You can then download the TextWidget JS library from Google Code.

Note that this control is accessible, which may not be the case for other controls discussed in the essay, unfortunately.

Limitations and extensibility

The control can be extended for more advanced scenarios by creating new types of inputs. You could add a specific input type for representing dates (maybe using DateJS?).

You could also improve the editing for numbers. Inline editing, as shown in the screenshots above, would provide a better flow than a modal prompt.
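One possible shape for such an extension point is a small registry of input types, each knowing how to parse what the user typed. This is only a sketch of the idea: the registry, registerInputType and parseInput are hypothetical names that do not exist in TextWidget, and the date type accepts only YYYY-MM-DD using plain JavaScript rather than DateJS.

```javascript
// Hypothetical sketch: an input-type registry, NOT part of TextWidget.
const inputTypes = {};

function registerInputType(name, type) {
  inputTypes[name] = type;
}

// Each type's parse() returns a value, or null when the text is not valid.
registerInputType("number", {
  parse(text) {
    const trimmed = text.trim();
    if (trimmed === "") return null;
    const n = Number(trimmed);
    return Number.isFinite(n) ? n : null;
  },
});

registerInputType("date", {
  parse(text) {
    // Assumption: dates are entered as YYYY-MM-DD.
    const m = /^(\d{4})-(\d{2})-(\d{2})$/.exec(text.trim());
    if (!m) return null;
    const [year, month, day] = m.slice(1).map(Number);
    const d = new Date(Date.UTC(year, month - 1, day));
    // Reject dates that silently roll over, such as 2008-02-31.
    const valid =
      d.getUTCFullYear() === year &&
      d.getUTCMonth() === month - 1 &&
      d.getUTCDate() === day;
    return valid ? d : null;
  },
});

function parseInput(typeName, text) {
  const type = inputTypes[typeName];
  if (!type) throw new Error("Unknown input type: " + typeName);
  return type.parse(text);
}

console.log(parseInput("number", "15"));       // 15
console.log(parseInput("number", "soon"));     // null
console.log(parseInput("date", "2008-02-31")); // null
```

With inline editing, a null result could simply leave the segment in edit mode instead of interrupting the user with a prompt.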

Towards a humane desktop

Aza Raskin also picked up the Magic Ink essay and mentions it in his CHI 2007 presentation (also recorded as a Google TechTalk).

He makes a case that the desktop metaphor based on applications is fundamentally limited and not humane. For example, you may have half a dozen different spell checkers on your system, for different applications, and yet have interfaces which lack spell checking.

Instead of an architecture centered around applications, he recommends one centered around content.

His thoughts on a better desktop interface include: make better use of language; use a zoomable UI to achieve high information density, context and spatial navigation; support adding content-processing components to the system as services for the entire desktop (spell checking, image processing, etc.); and provide a consistent way to invoke the desired service on content through a universal access interface.

Many similar principles are brought up by Tufte in his iPhone review (video). He notices how they are applied consistently throughout the iPhone applications, with a few exceptions.

Interestingly, the architecture of Google's mobile operating system, Android, seems to follow the same trend.

As this introduction video explains, Android supports pluggable service providers, an easy way to flow from one application context to the next, and a stack of contexts/applications (which is analogous to zooming).

The mobile space seems to be a great experimental ground for new interfaces. I'm excited to see some of these paradigms flow back into the desktop and the web.

PS: in another talk, Aza mentioned the problem of tab overflow and pointed to another of Bret's ideas and prototypes, ScrollTabs.

It's an interesting concept.
